Photoshop Is Being Eaten by the Prompt Box: The New Face of AI Image Editing

After a recent trip, I faced a familiar pile of photos needing cleanup. A stray object here, an awkward background detail there. My first instinct was Photoshop, but the full subscription feels steep for someone who isn’t a pro. Mobile apps? My thumbs are too clumsy for precision taps.

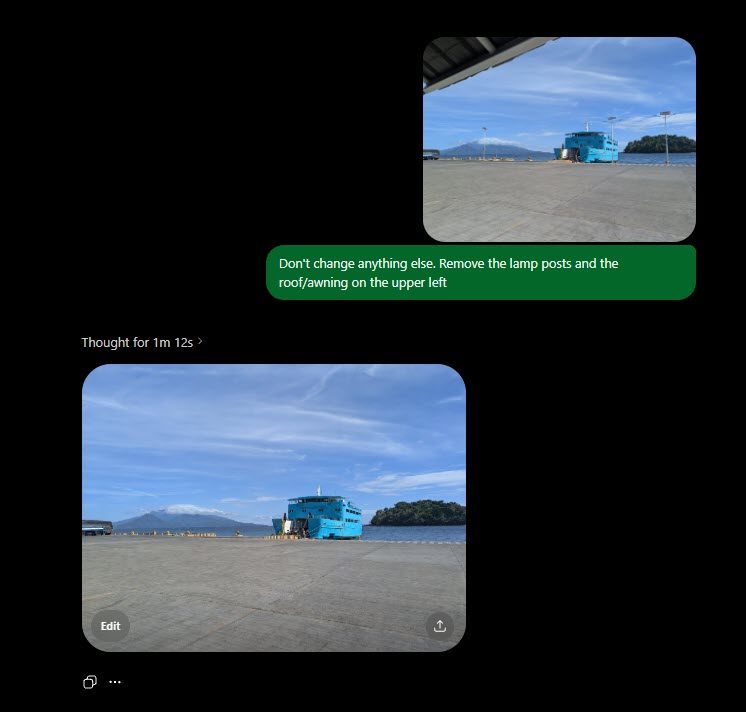

So I turned to the obvious alternative: AI image editing. Every tech company seems convinced the prompt box is the future. Why not describe the edit and let the machine handle it? Sometimes it worked beautifully. Other times, it felt like a polite argument with software that kept misunderstanding simple requests. The experience made one thing clear: AI image editing is evolving fast, but it is not necessarily getting simpler.

Why Every Editor Wants to Become a Chat Box

The appeal is clear. Most people never wanted to become Photoshop monks, memorizing layers, masks, and blend modes. They just wanted to erase a person, fix a crooked shot, or generate a decent graphic without a tutorial. The prompt box skips the ceremony. It doesn’t ask if you know what a layer mask is. It asks for a result.

Companies like Adobe are embedding Firefly deeper into Photoshop, while Canva offers a buffet of “Magic” buttons. Google’s Gemini, ChatGPT image generation, Midjourney, Ideogram, and Runway all circle the same idea: editing should feel like asking for help, not operating complex software. That shared bet is pushing conversational photo editing into the mainstream.

For casual users, this is liberation. A 20-second prompt can achieve what once required patience or a friend who owed you a favor. The old barrier was technical; the new one is fuzzier: knowing what looks right, what looks fake, and where the machine decided to improvise.

When Editing Becomes Negotiation

However, asking for help isn’t the same as getting help. Anyone who has used AI photo tools for more than five minutes knows the letdown of a result that is almost right but somehow more annoying than an outright failure. The person is removed, but the background looks like melted wallpaper. The lighting improves, but the photo now resembles a luxury dentist ad. The object moves, but the AI adds a mysterious extra finger.

This is where editing becomes negotiation. You’re not just editing the image; you’re editing the request. “Make it warmer, but don’t make it fake. Remove that object, but keep the background natural.” Old tools were annoying because they made you learn rules. Prompt-based editing is annoying because it pretends language is enough—which is generous nonsense. Language is mushy, visual judgment is slippery, and AI models can be confidently wrong.

The Reality of Iterative Edits

The first result is often the best sales pitch. It looks shockingly good at a glance. Then you ask for corrections: fix the lighting, restore detail, reduce waxy skin. After a few rounds, the image drifts. Details soften, faces turn into blobs, and the clean edit becomes less impressive the harder you try to fix it.

For professionals, this can be useful but not relaxing. Boring work gets faster, but supervision gets heavier. Someone must catch flattened images, broken compositions, or softened details before anyone else sees them. The job shifts from doing to directing—which sounds clean until the AI gives everyone porcelain skin.

The Future of Image Editing

For casual users, the interface gets friendlier and power gets closer. But the frustration gets harder to name. When a traditional editor annoyed you, at least the villain had buttons. When an AI editor misinterprets a reasonable request, it feels like a conversation going badly.

Photoshop will survive. Powerful tools usually do. But its old logic is being absorbed into a simpler, stranger interface. The future of editing may not be learning where the tools are—it may be learning how to talk to a machine that keeps pretending it understood you.

The practical takeaway is to embrace AI image editing while staying critical. Treat a prompt as a starting point, not a final answer, and always check the output for AI hallucinations such as extra fingers or strange textures. For more, see our guides comparing the top AI photo tools and covering prompt engineering tips.

Ultimately, the prompt box is eating Photoshop’s lunch—but the meal isn’t fully cooked yet. Editors who adapt will thrive, but they’ll need to sharpen both their visual eye and their conversational skills.