I Let Gemini Take Over My Gmail—Here’s What Happened

My inbox used to feel like a black hole. Between meeting invites, marketing pitches, product PR, and urgent updates, the noise was deafening. There were days I avoided opening emails altogether, paralyzed by the fear of missing something critical buried in the clutter. That’s when I decided to put Gemini in Gmail to the test—and the results were eye-opening.

How Gemini Transforms Email Overload

Having an AI assistant built directly into my inbox felt like a safety net. Instead of drowning in a sea of messages, Gemini cut through the clutter, helping me stay on top of what mattered most. It didn’t just organize—it prioritized.

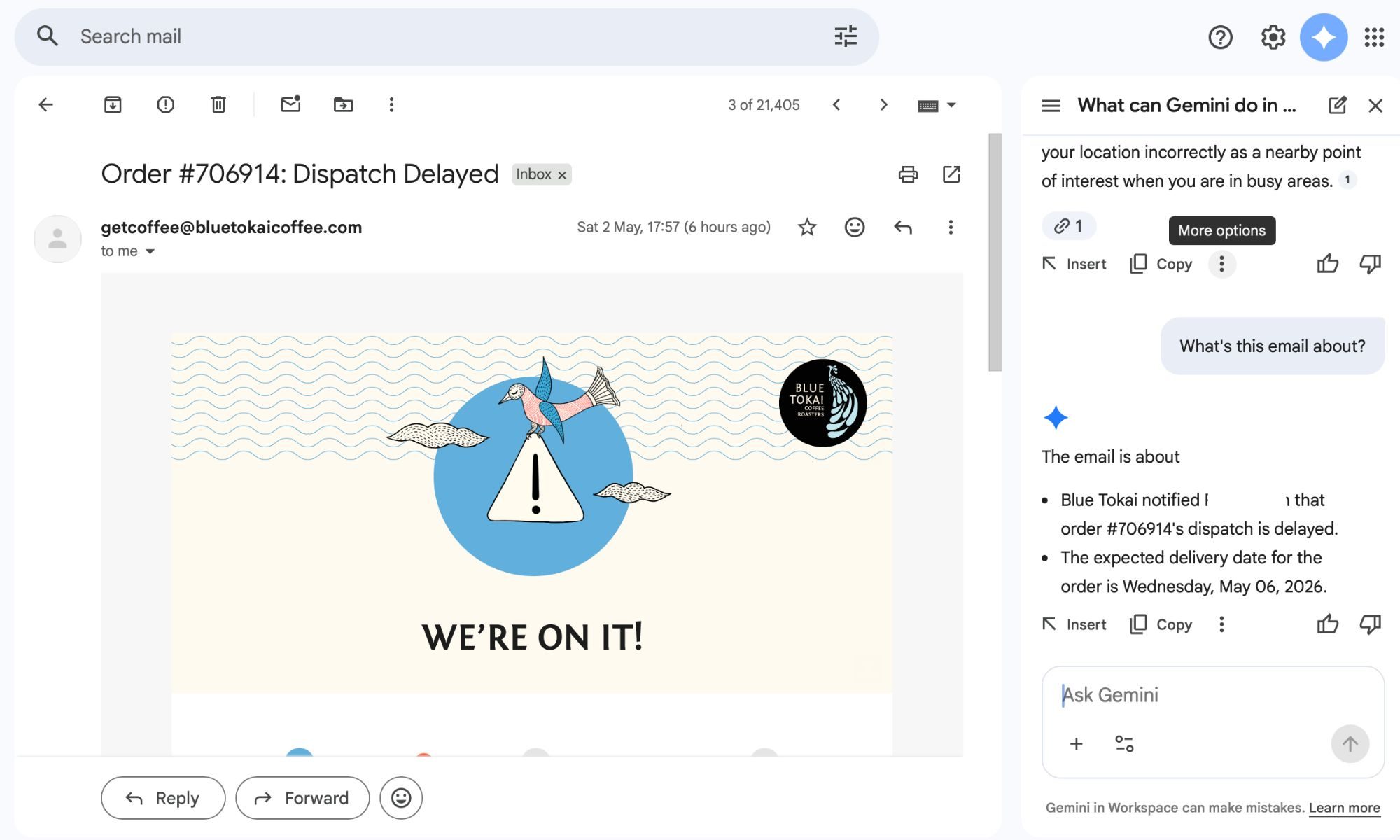

Building on this, I started using Gemini to summarize lengthy marketing emails. These messages often contain timelines, embargo details, and launch notes that are easy to skim past. Gemini highlighted key dates and flagged crucial information, turning dense blocks of text into clear, actionable points.

Accuracy That Builds Trust

At first, I double-checked every summary against the original email. Over time, the summaries kept holding up. Gemini caught details I might have missed, such as a meeting mentioned in passing, and even helped turn them into calendar reminders with pre-filled details. On a busy day, that small automation made a big difference.

Yes, you could do all this manually. But when your plate is full, reading and decoding long emails feels exhausting. Gemini handles that first pass, freeing me to focus on work that actually needs my attention.

Writing Replies Without the Grind

The next challenge was replying to endless email threads—five people CC’d, replies stacked on replies, and one critical action item hidden inside. That used to eat up my time. Now, Gemini handles the groundwork.

My workflow is simple: I ask Gemini to summarize the thread, then request a suggested reply. For a product PR email with embargo details, it might draft a response acknowledging the pitch and asking for review units. For a meeting thread, it can confirm attendance or request a reschedule.

What’s interesting is that I rarely send those replies as-is. I tweak the tone, add my opinion, or adjust for the recipient. But the base is solid. The suggestions sound natural, sometimes even witty, and so far no one has seemed to notice that AI had a hand in them. If I don’t like the first draft, I ask for alternatives. Having a few options laid out takes the repetitive parts out of writing replies.

Connecting the Dots Across Apps

Beyond email, Gemini excels at cross-referencing data. It pulls context from older threads, digs into Google Drive files, and checks my Calendar. For example, if I vaguely remember a media kit from weeks ago, I just ask Gemini. It finds the email, retrieves the attachment, and delivers it.

Similarly, if I’m unsure about a scheduled briefing, Gemini cross-checks my Calendar and confirms the details without me hopping between apps. This seamless integration saves me from constantly switching tabs or searching keywords manually.

Privacy Concerns vs. Productivity Gains

The biggest hesitation was privacy. Letting an AI into your inbox isn’t trivial—emails hold conversations, work details, and plans. I still think about it. But I’ve come to terms with how much of our lives already exist online. That doesn’t mean privacy stops mattering, but it shifts the balance between convenience and control.

For me, the choice was clear: either hold back and keep doing everything manually, or lean into tools that lighten the load. Right now, I value my time more. Since adopting Gemini, my relationship with my inbox has changed. It feels manageable. I’m not drowning or second-guessing what I missed. I’m just getting through it without overthinking every step.

In hindsight, I’m glad I didn’t let hesitation stop me. Sometimes, trying something out tells you more than thinking about it ever will.
