OpenAI Codex Chrome Extension: AI Agent Moves Into Your Browser for Real Work

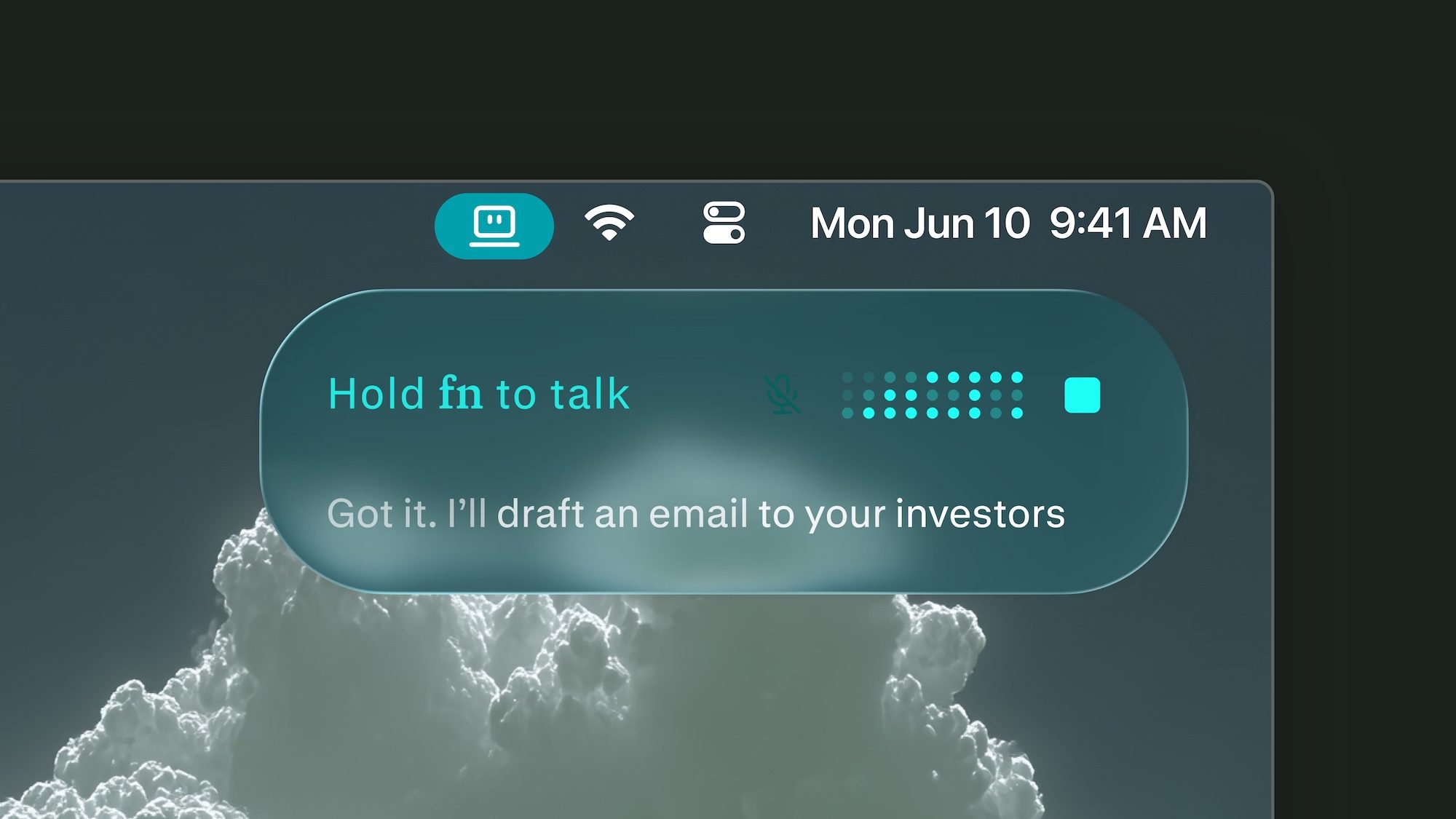

With its Codex Chrome extension, OpenAI is pushing the AI agent beyond the developer sandbox and into the everyday web applications where professionals spend most of their time. The move turns Codex from a coding assistant into a browser-based agent that can interact with authenticated sessions in Gmail, Salesforce, LinkedIn, internal dashboards, and more.

As a result, the extension can now help with research, CRM updates, dashboard checks, and browser-based debugging, tasks that often stall because they are spread across multiple tabs. That expanded access also brings a new set of security considerations that users need to weigh carefully.

What the OpenAI Codex Chrome Extension Unlocks

The most impressive aspect of this update is the contextual awareness Codex can carry into web apps. Instead of starting from a blank prompt, the agent operates where someone is already logged in, making it far more practical for private dashboards, forms, and account-based tools. This means users can delegate repetitive browser tasks without constantly re-authenticating or copying data between windows.

Building on this capability, Codex's browser automation lets the agent follow workflows that span multiple sites. For instance, it can extract data from a dashboard, populate a CRM entry, and then send a summary email, all within the same authenticated session. That removes the manual copy-and-paste steps that usually connect those tools.

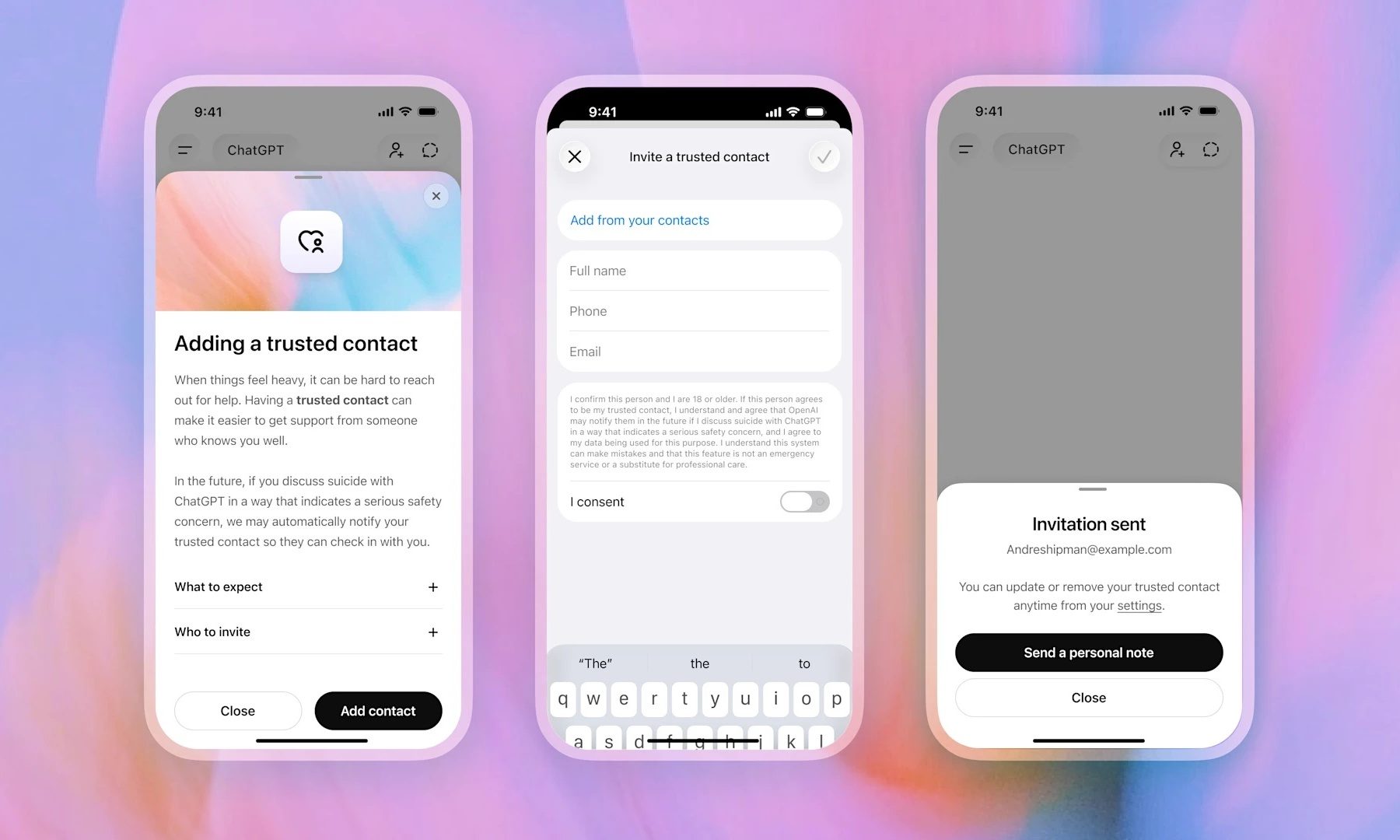

Nevertheless, this access also makes the extension more sensitive than a routine product update. Agentic AI raises security concerns when autonomy, tool use, and external access come together, because each added capability gives the system more room to make a bad call or follow a bad instruction.

How Much Access Is Too Much for an AI Agent?

Codex can now follow a task through the web, use browser context, and return results for review. OpenAI states that the extension does not take over the active browsing session, which keeps the user closer to the work rather than handing over the entire tab. This design choice aims to balance utility with control.

However, the risk comes from what that autonomy can touch. A system that can read a dashboard, fill out a form, or interact with an internal tool needs stronger review habits than a chatbot answering questions in a separate window. OpenAI is rolling out the extension in all regions except the EU and UK, where support is still pending, so for most users the question of which permissions to grant is already a practical one.

Users should therefore weigh the risks of agentic AI before granting broad access. The same autonomy that makes Codex powerful also creates room for errors, especially if the agent misinterprets a command or encounters an unexpected page layout.

Where Caution Pays Off With Browser-Based AI

The next test for OpenAI is whether it can make Codex’s browser work feel controlled rather than merely impressive. Site approvals, permission settings, and review steps will decide whether the extension feels like a productivity boost or a shortcut with too much reach.

For early adopters, the practical move is to start small. Give Codex access to the few sites where the benefit is obvious, avoid sensitive accounts until the workflow proves itself, and review what it does before letting the agent handle higher-stakes work. This is the same least-privilege habit that applies to any automation with access to logged-in accounts.
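The "start small" advice can be pictured as a deny-by-default site policy. The sketch below is purely illustrative and does not reflect how the Codex extension actually stores or enforces permissions; `SitePolicy`, `allowed_hosts`, `review_required_hosts`, and the example hostnames are all hypothetical names chosen for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from urllib.parse import urlsplit


@dataclass
class SitePolicy:
    """Hypothetical per-user policy; not the extension's real permission model."""

    # Hosts the agent may act on without a prompt.
    allowed_hosts: set[str] = field(default_factory=set)
    # Hosts where every action should wait for explicit human approval.
    review_required_hosts: set[str] = field(default_factory=set)

    def decide(self, url: str) -> str:
        """Return "allow", "review", or "deny" for the given URL."""
        host = (urlsplit(url).hostname or "").lower()
        # Review takes priority so a sensitive host is never silently allowed.
        if host in self.review_required_hosts:
            return "review"
        if host in self.allowed_hosts:
            return "allow"
        # Anything not explicitly listed is denied.
        return "deny"


# Example: one low-risk dashboard is allowed, the CRM requires review.
policy = SitePolicy(
    allowed_hosts={"dashboard.example.com"},
    review_required_hosts={"crm.example.com"},
)
print(policy.decide("https://dashboard.example.com/reports"))
print(policy.decide("https://crm.example.com/leads/1"))
print(policy.decide("https://mail.example.com/"))
```

The design choice worth noting is the default: a site that appears on neither list is denied, so widening the agent's reach is always a deliberate step rather than an accident.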

Additionally, integrating Codex with other productivity tools can enhance its value. For example, using it alongside project management platforms or analytics dashboards can streamline reporting tasks. However, users should remain vigilant about data privacy and ensure that sensitive information is not exposed unnecessarily.

In conclusion, the OpenAI Codex Chrome extension represents a meaningful evolution in AI agent capabilities. It brings the promise of automated browser tasks closer to reality, but only if users implement it with clear boundaries and regular oversight. As the technology matures, the balance between utility and security will define its long-term success.
