Your ChatGPT history is a personality test you didn’t know you were taking

Every time you ask ChatGPT to draft an email, vent about a relationship problem, or look up symptoms, you might be handing over more than just a query. Researchers at ETH Zurich have trained an AI model to predict personality traits directly from real ChatGPT conversation logs, and it performed unsettlingly well. The finding raises serious questions about privacy, data ethics, and how companies might use your digital footprint.

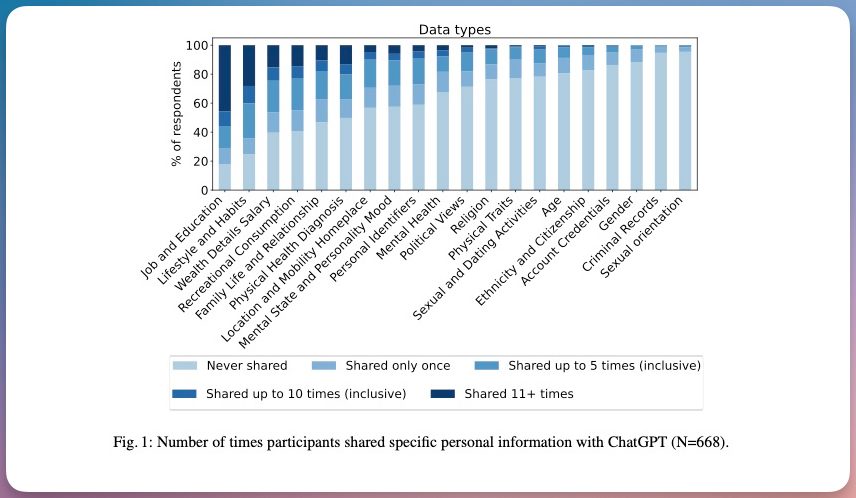

As reported by TechXplore, the study collected 62,090 real conversations from 668 ChatGPT users. Participants also completed a standard personality test, giving the researchers a baseline to measure against. The AI was then trained to classify each user as low, medium, or high on each of the Big Five traits: openness, conscientiousness, extraversion, agreeableness, and neuroticism. The fine-tuned model beat random chance on all five traits. Extraversion was the easiest to predict, with up to 44% higher accuracy than guessing.

Does it matter what you talk about with ChatGPT?

Absolutely. The study found that chats involving mental health topics made extraversion particularly easy to infer. Discussions about religion were strongly linked to conscientiousness inference, and conversations about mental state and mood made openness more predictable. Even seemingly casual conversations contained enough signal to be useful. The researchers also found that the more you use ChatGPT, the easier you become to profile.

This means that your everyday questions, from asking for cooking recipes to complaining about work, are not as neutral as they seem. Each exchange adds a small piece to a larger puzzle that AI can assemble into a detailed personality portrait. Given how much we share with ChatGPT, how easily that portrait can be assembled is a real concern.

Why does ChatGPT personality prediction matter beyond the research lab?

The researchers are clear about the implications. Service providers already have access to all of this data, and with over 800 million monthly ChatGPT users as of January 2026, the scale of potential profiling is enormous. A personality profile built from your chat history could be used for targeted advertising, personalized persuasion, or in worst-case scenarios, large-scale influence campaigns.

Recently, ChatGPT has started integrating ads. With the data it has on hand about us, consider how easily those ads could be tailored to manipulate our thinking. For instance, if the system infers that you score high in neuroticism, it might show you ads for anxiety relief products or financial planning services that prey on your fears. Similarly, an extraverted user might see ads for social events or networking tools.

How accurate is the personality profiling?

The study’s accuracy is impressive but not flawless. Extraversion was the easiest trait to predict, achieving up to 44% higher accuracy than random guessing. However, other traits like openness and agreeableness were more challenging. Still, as AI models improve, so will their ability to read us. The researchers note that even a modest improvement in prediction accuracy can have significant real-world consequences when applied to hundreds of millions of users.
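To put the "44% higher than random guessing" figure in context, here is a minimal sketch of the arithmetic. It rests on assumptions the article does not confirm: that the three classes (low, medium, high) are balanced, so random guessing is right one time in three, and that the figure is either a relative gain or a gain in percentage points. The function names are hypothetical and are not taken from the study.

```python
def accuracy_if_relative(num_classes: int, gain: float) -> float:
    """Accuracy if `gain` is a relative improvement over uniform guessing."""
    chance = 1.0 / num_classes  # assumption: balanced classes
    return chance * (1.0 + gain)


def accuracy_if_absolute(num_classes: int, gain: float) -> float:
    """Accuracy if `gain` is added as percentage points on top of uniform guessing."""
    chance = 1.0 / num_classes  # assumption: balanced classes
    return chance + gain


if __name__ == "__main__":
    classes = 3   # low, medium, high
    gain = 0.44   # the "up to 44%" figure quoted for extraversion
    print(f"chance baseline:        {1.0 / classes:.1%}")
    print(f"relative reading:       {accuracy_if_relative(classes, gain):.1%}")
    print(f"percentage-point reading: {accuracy_if_absolute(classes, gain):.1%}")
```

The two readings give very different pictures, which is why it is worth checking the original paper before quoting the number as a raw accuracy.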

What can you do to protect your privacy?

For now, it is worth remembering that your AI chatbot is not a diary. At least not a private one. You can also take a proactive approach and delete your ChatGPT history regularly so that fewer personal chats remain stored in your account. This simple habit can reduce the amount of data available for profiling.

Additionally, consider reviewing your privacy settings on all AI platforms. Many services allow you to opt out of data collection for training purposes. You might also want to avoid sharing highly sensitive personal information—like mental health struggles or financial details—in conversations with chatbots. If you need advice on such topics, consult a human professional instead.

Building on this, it’s wise to treat every chatbot interaction as potentially public. Think before you type: would you be comfortable if this conversation appeared on a billboard? If not, it’s probably best not to share it with an AI.

The bigger picture: AI and the future of targeted advertising

This research highlights a growing trend: the convergence of AI and psychology for commercial gain. Companies like Google, Meta, and OpenAI are sitting on vast troves of conversational data. With the right algorithms, they can turn that data into detailed psychological profiles for hyper-targeted advertising.

The ethical implications are just as serious. If AI can predict your personality from chat logs, it could be used to manipulate your decisions, from what you buy to how you vote. Regulators are beginning to take notice, but the technology is moving faster than the law. As a result, individual vigilance is currently the best defense.

In conclusion, your ChatGPT history is more than a log of queries—it’s a window into your personality. While the technology is fascinating, it also demands caution. Stay informed, protect your data, and remember: in the digital age, your words have power beyond what you imagine.
