I Built a Mac App to Track My Bad Posture with AirPods — Without Writing a Single Line of Code

Imagine wanting a custom app to fix a nagging problem when you have zero coding experience. That was my reality a few weeks ago. I was tired of slouching at my desk, and existing solutions felt invasive or clunky. So I decided to build a Mac app with AI: one that uses my AirPods’ motion sensors to detect bad posture. The best part? I never wrote a single line of code. I simply described what I wanted to an AI chatbot, and it did the heavy lifting.

This journey started with a simple idea: use the motion sensors inside AirPods to monitor posture changes without relying on a webcam. I wanted something private, efficient, and personal. After experimenting with Claude from Anthropic, I realized that the barrier to creating simple, functional software has dropped dramatically. Now, anyone can build a basic Mac app with AI by describing what they need in plain English.

Why Move Away from Camera-Based Posture Tracking?

Earlier, I tested an open-source app that used my Mac’s webcam to detect slouching. It worked, but it raised serious privacy concerns. Every time the camera activated, I wondered: Is someone watching? Is my data being uploaded to a server? The app processed everything locally, but the unease remained. Many users shared similar fears on Reddit, questioning data storage and potential backdoors.

This pushed me to find an alternative. Instead of using a camera, why not tap into the motion sensors already built into my AirPods Pro? These sensors track head movement and orientation. If I could calibrate what good and bad posture look like, an app could alert me whenever I slouched. The challenge was building the software, and I had no coding skills. That’s when I turned to Claude.
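The article doesn’t show the code Claude generated. What follows is only a minimal sketch of how a Mac app can read head orientation from AirPods, not the app’s actual implementation. It uses Apple’s CoreMotion `CMHeadphoneMotionManager`, which is available on macOS 14 and later. It assumes that head pitch (reported by CoreMotion in radians) is a reasonable proxy for slouching. `HeadPitchReader` and `degrees(fromRadians:)` are hypothetical names. A real app will most likely also need an `NSMotionUsageDescription` entry in its Info.plist before the system grants motion access.

```swift
#if canImport(CoreMotion)
import CoreMotion
#endif

/// CoreMotion reports attitude angles in radians; degrees are easier to reason about.
func degrees(fromRadians radians: Double) -> Double {
    radians * 180 / .pi
}

#if canImport(CoreMotion)
/// Hypothetical helper: streams head pitch (in degrees) from connected AirPods.
/// Assumes macOS 14+ and an NSMotionUsageDescription entry in the app's Info.plist.
@available(macOS 14.0, iOS 14.0, *)
final class HeadPitchReader {
    private let manager = CMHeadphoneMotionManager()

    /// Calls `onPitch` on the main queue for each motion sample.
    /// Returns false if no headphones with motion support are available.
    func start(onPitch: @escaping (Double) -> Void) -> Bool {
        guard manager.isDeviceMotionAvailable else { return false }
        manager.startDeviceMotionUpdates(to: .main) { motion, error in
            // Skip samples that arrive with an error or without data.
            guard let motion, error == nil else { return }
            onPitch(degrees(fromRadians: motion.attitude.pitch))
        }
        return true
    }

    /// Stops the motion stream, e.g. when the user pauses monitoring.
    func stop() {
        manager.stopDeviceMotionUpdates()
    }
}
#endif
```

The `#if canImport(CoreMotion)` guard only keeps the pure conversion helper compilable on platforms without CoreMotion. The motion reader itself runs only on Apple platforms.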

How I Built a Mac App with AI in Under an Hour

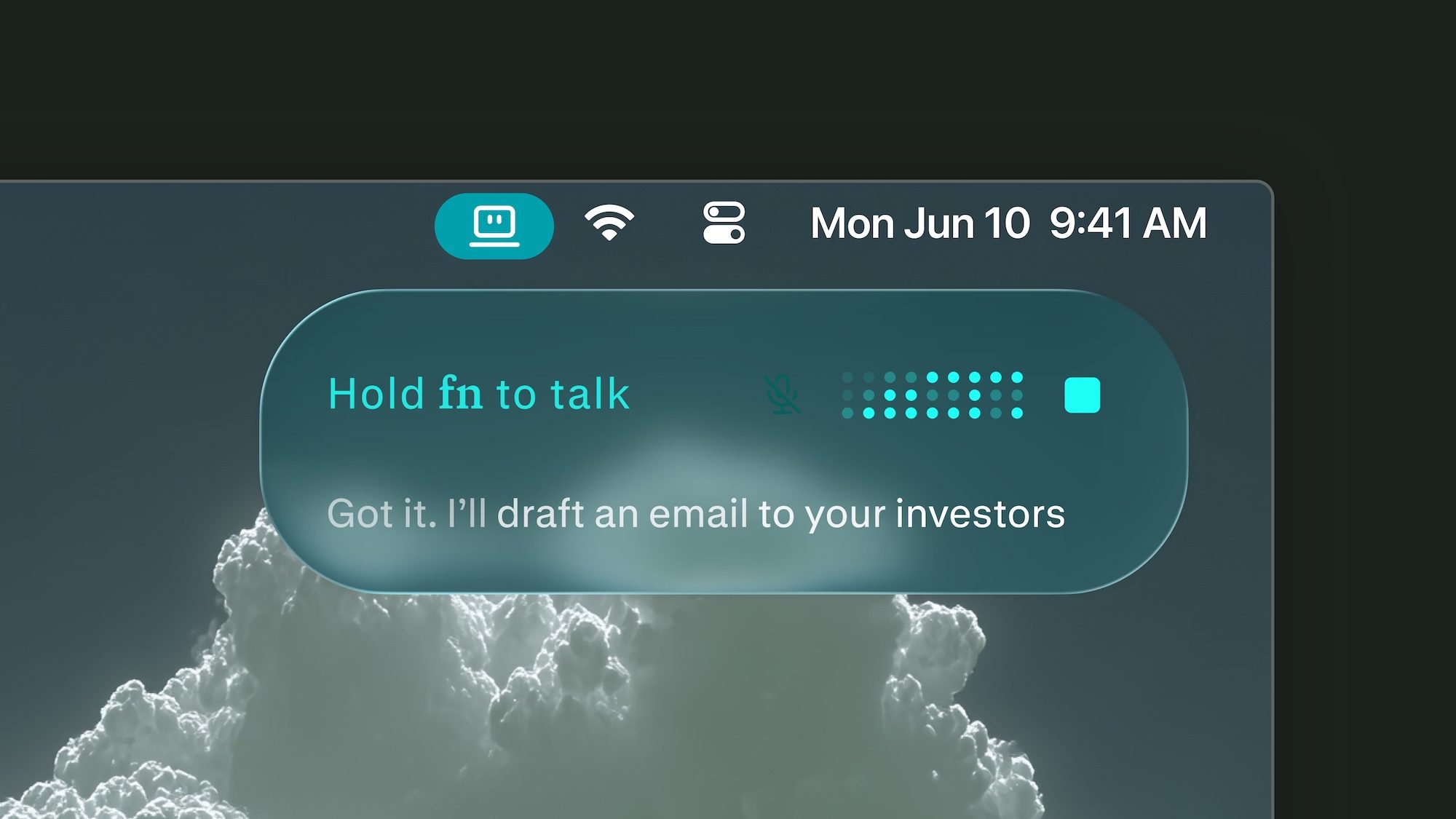

I opened Claude and typed: “I want to build a Mac app that uses AirPods motion sensors to detect bad posture and send notifications.” The AI asked a few clarifying questions, such as whether I wanted a menu bar utility or a full-window app. I answered each one in a few words. Within 30 minutes, Claude had generated the entire codebase, including a menu bar icon, notification banners, calibration controls, and a two-stage warning system.

Claude even designed the app icon and saved everything neatly in a folder. I never had to read a line of Swift or open Xcode. The AI handled all the technical details, from parsing motion data to the animation logic. When I ran the compiled app, it worked flawlessly on the first try, with no errors and no crashes. For me, this showed that no-code app development is not just a buzzword; it’s a practical reality.

The Calibration Process: Simple and Intuitive

When I launched the app, it asked me to sit upright for a few seconds to record my “good posture.” Then I slouched forward so it could capture my “bad posture.” The app uses the AirPods’ gyroscope and accelerometer data to tell the two apart, with no manual input needed. Once calibrated, it runs silently in the menu bar. While I sit up straight, the icon stays grey. When I start slouching, it turns yellow, then red. After 12 seconds of poor posture, a notification pops up with a warning chime.
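The calibration and two-stage warning described above can be sketched in plain Swift, testable without AirPods. Treat this as one possible design, not the generated app’s code. It rests on several assumptions:

- The samples are head pitch in degrees.
- The yellow stage starts once posture is at least halfway from the upright pose toward the slouched pose (`threshold = 0.5`, a hypothetical default).
- Red plus a single notification follows once the slouch has lasted 12 seconds.

`PostureLevel`, `PostureCalibration`, and `PostureMonitor` are hypothetical names.

```swift
/// The three states behind the grey / yellow / red menu bar icon.
enum PostureLevel: Equatable {
    case good       // grey icon
    case slouching  // yellow icon: first warning stage
    case alert      // red icon: second stage, notification due
}

/// Reference poses captured during calibration, as head pitch in degrees.
struct PostureCalibration {
    let goodPitch: Double
    let badPitch: Double

    /// Averages the samples recorded while sitting upright and while slouching.
    /// Returns nil if either recording is empty.
    init?(uprightSamples: [Double], slouchedSamples: [Double]) {
        guard !uprightSamples.isEmpty, !slouchedSamples.isEmpty else { return nil }
        goodPitch = uprightSamples.reduce(0, +) / Double(uprightSamples.count)
        badPitch = slouchedSamples.reduce(0, +) / Double(slouchedSamples.count)
    }

    /// 0 at the upright pose, 1 at (or beyond) the slouched pose, clamped to 0...1.
    func slouchFraction(pitch: Double) -> Double {
        let span = badPitch - goodPitch
        guard span != 0 else { return 0 }  // degenerate calibration: never flag
        return min(max((pitch - goodPitch) / span, 0), 1)
    }
}

/// Two-stage escalation: yellow as soon as posture crosses the threshold,
/// red plus one notification once it has stayed there for `alertDelay` seconds.
struct PostureMonitor {
    let calibration: PostureCalibration
    let threshold: Double    // hypothetical default: halfway between the two poses
    let alertDelay: Double   // seconds; 12 matches the behaviour described in the article
    private var slouchStart: Double?
    private var notified = false

    init(calibration: PostureCalibration, threshold: Double = 0.5, alertDelay: Double = 12) {
        self.calibration = calibration
        self.threshold = threshold
        self.alertDelay = alertDelay
    }

    /// Feeds one pitch sample with its timestamp in seconds.
    /// `notify` is true only once per continuous slouch episode.
    mutating func update(pitch: Double, at time: Double) -> (level: PostureLevel, notify: Bool) {
        guard calibration.slouchFraction(pitch: pitch) >= threshold else {
            // Good posture resets the episode.
            slouchStart = nil
            notified = false
            return (.good, false)
        }
        let start = slouchStart ?? time
        slouchStart = start
        guard time - start >= alertDelay else { return (.slouching, false) }
        let notify = !notified
        notified = true
        return (.alert, notify)
    }
}
```

Measuring posture as a fraction of the distance between the two recorded poses means the logic doesn’t depend on which direction the sensor reports as positive. It relies only on each user’s own calibration.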

I also had friends with second-generation AirPods Pro try the app. They were surprised by how accurate it was: the motion sensing felt responsive, and the alerts were helpful rather than annoying. That small test suggested that AirPods-based posture tracking is a viable alternative to camera-based systems.

Privacy First: On-Device Processing Keeps Data Safe

Privacy was my primary motivation. Many health apps upload data to cloud servers, exposing sensitive information to third parties. My app processes everything locally. The AirPods stream motion data to the Mac over Bluetooth, all analysis happens on the Mac itself, and no posture data is sent anywhere else. Without a server in the loop, there is no cloud copy to leak or to be accessed without permission.
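The alert itself can stay on the Mac too, because macOS schedules and delivers local notifications without any push server. The sketch below uses Apple’s UserNotifications framework and is an assumption about how the warning chime could be posted, not the app’s actual code. `postPostureAlert(secondsSlouching:)` and `postureAlertBody(secondsSlouching:)` are hypothetical helpers. Note that the notification center generally works only inside a bundled app, not from a bare command-line tool.

```swift
#if canImport(UserNotifications)
import UserNotifications
#endif

/// Hypothetical helper: the banner text for a given slouch duration.
func postureAlertBody(secondsSlouching: Int) -> String {
    "You've been slouching for \(secondsSlouching) seconds. Sit up straight."
}

#if canImport(UserNotifications)
/// Posts a local notification with the default sound.
/// No network request is involved; the system delivers it on-device.
@available(macOS 10.14, iOS 10.0, *)
func postPostureAlert(secondsSlouching: Int) {
    let center = UNUserNotificationCenter.current()
    // The user must allow banners and sounds once; later calls return immediately.
    center.requestAuthorization(options: [.alert, .sound]) { granted, _ in
        guard granted else { return }
        let content = UNMutableNotificationContent()
        content.title = "Posture check"
        content.body = postureAlertBody(secondsSlouching: secondsSlouching)
        content.sound = .default
        // A nil trigger asks the system to deliver the notification right away.
        let request = UNNotificationRequest(identifier: "posture-alert", content: content, trigger: nil)
        center.add(request)
    }
}
#endif
```

Reusing a fixed identifier means a newer alert replaces an older pending one rather than stacking duplicates.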

For anyone concerned about health-data privacy, this is a game-changer. You don’t need to trust a developer’s privacy policy, because you control the software entirely. You can even keep the app to yourself and never publish it to an app store. That is data sovereignty in its most direct form.

The Limitations of No-Code App Development

While the experience was empowering, I have to be realistic. Building a personal utility is one thing; launching a commercial app is another. To publish on the App Store, you need a developer account, you have to get through Apple’s review process, and you must keep handling updates. For now, I have no plans to release this app publicly. The goal was to prove that building a Mac app with AI is possible for non-coders.

Tools like Claude excel at generating functional prototypes, but they have limits. Complex integrations (e.g., connecting to external APIs or payment systems) still require technical knowledge. However, for personal projects or internal tools, the barrier has never been lower. As AI coding assistants improve, the gap between idea and execution is likely to shrink further.

What This Means for the Future of Software Creation

This experiment changed my perspective. I no longer feel helpless when a desired app doesn’t exist. Instead of waiting for a developer, I can prompt an AI to build it. The era of no-code app development is here, and it’s accessible to anyone with a clear idea and a willingness to experiment. Whether you want a posture tracker, a habit reminder, or a custom dashboard, the tools are ready.

In the end, I built a Mac app with AI that solves a real problem — without writing a single line of code. If I can do it, so can you. The only limit is your imagination.
