Google Responds to Chrome’s Silent Gemini Nano Install, Sidesteps Consent Issue

Google has finally broken its silence on the growing controversy surrounding Chrome’s automatic download of a 4GB AI model. However, the company’s response leaves a critical question hanging: why did it install the Gemini Nano model without asking users first?

Parisa Tabriz, Google’s Vice President and General Manager for Chrome, took to social media to address the backlash. She framed the Gemini Nano auto-install as a core part of Chrome’s security and developer roadmap. Yet, privacy advocates argue that the lack of explicit consent violates both user trust and European law.

How the Gemini Nano Auto-Install Sparked Outrage

The controversy erupted after privacy researcher Alexander Hanff documented Chrome’s behavior in detail. He discovered that the browser silently downloads the Gemini Nano model—roughly 4GB in size—onto compatible devices without any prompt. Even more troubling, manually deleting the file triggers an automatic re-download upon the next browser restart.

This means that users are stuck with a large file consuming storage and bandwidth, whether they want it or not. The situation worsened when critics noticed a glaring inconsistency: Chrome’s new “AI Mode” in the address bar does not even use the local model. Instead, it sends queries to Google’s cloud servers. As a result, users absorb the cost of a 4GB download that has no connection to the browser’s most visible AI feature.

Privacy advocates have also flagged potential violations of the EU’s ePrivacy Directive, which generally requires user consent before information is stored on a device. Critics say Chrome’s automatic model download bypasses this requirement.

Google’s Defense: Security and Developer APIs

In a series of posts on X, Tabriz acknowledged the concerns but stopped short of apologizing or offering a clear opt-in mechanism. She explained that Google has been offering Gemini Nano in Chrome since 2024 as a lightweight, on-device model. According to her, it is central to Chrome’s developer APIs and security features, including scam detection.

“On-device AI is core to our developer and security strategy,” Tabriz wrote. She emphasized that the model processes data locally rather than sending it to Google’s servers, which theoretically enhances privacy. She also noted that the model automatically uninstalls when a device is low on storage.

However, Tabriz did not address the consent question directly. She also failed to explain why the model reinstalls itself after a user deletes it. Google has separately stated that users can disable and remove the model through Chrome’s settings, and that once disabled, it will not re-download. But this requires users to know about the setting in the first place.

Privacy Implications of Silent AI Downloads

The Google Gemini Nano privacy debate highlights a broader tension between convenience and consent. On-device AI models can improve user experience by enabling faster, offline features. However, forcing a 4GB download without notice raises serious questions about user autonomy.

For European users, the issue is particularly acute. The ePrivacy Directive generally requires prior consent before information is stored on a user’s device, with a narrow exception for storage that is strictly necessary to provide a service the user has explicitly requested. By automatically downloading the Gemini Nano model, Chrome may be in violation of this law. Privacy advocates argue that Google’s response fails to address this legal risk.

The re-download behavior is especially concerning. A user who actively removes the file is signaling a clear preference. An automatic re-download overrides that preference and could be seen as a form of digital coercion.

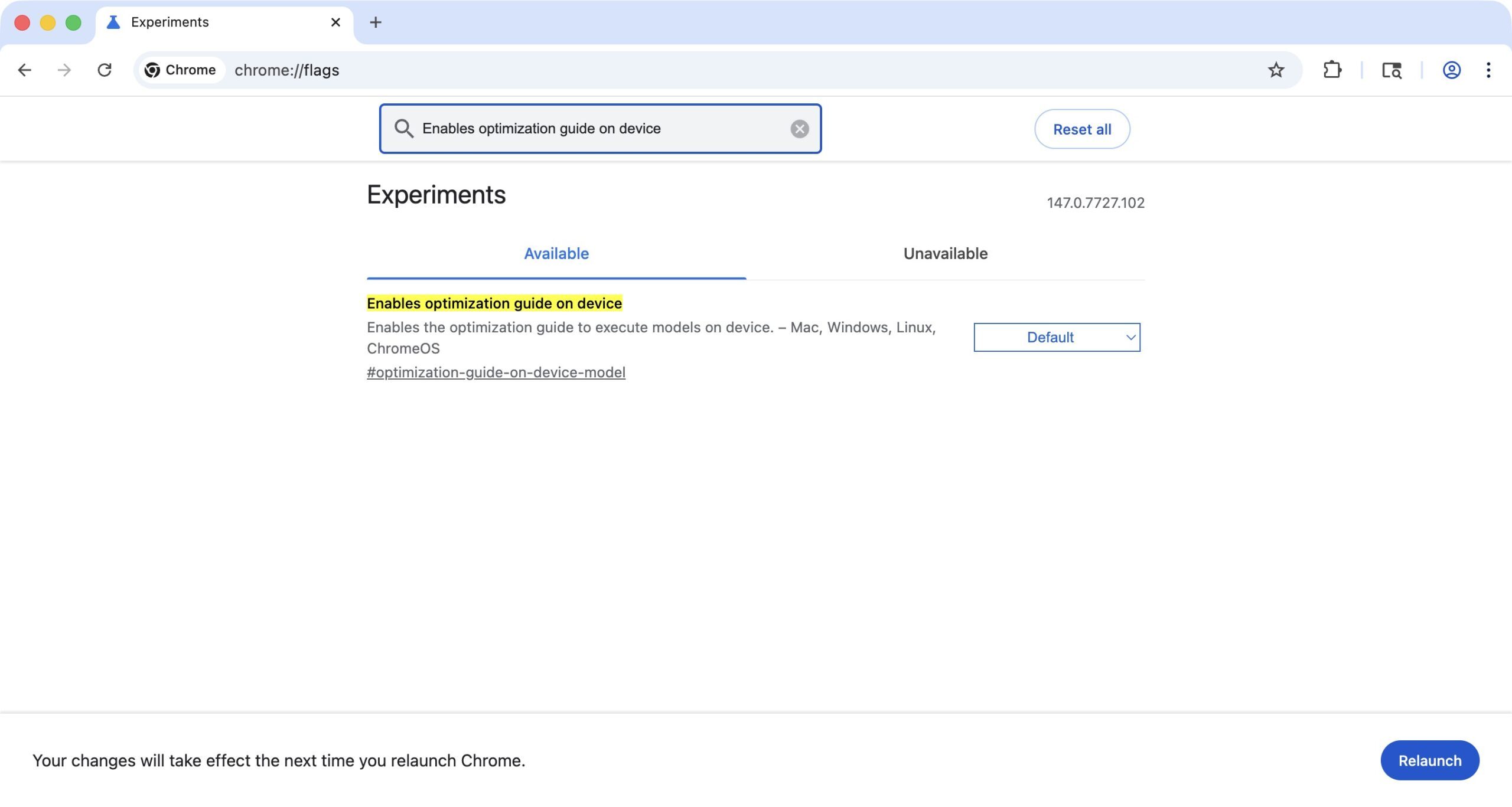

How to Disable the Gemini Nano Model

For users who want to take control, Google has provided a way to disable the feature. Navigate to Chrome’s settings, then to the “AI and privacy” section. From there, you can toggle off the Gemini Nano model. Once disabled, it should not re-download. For a step-by-step guide, check out our article on how to disable Gemini Nano in Chrome.

Alternatively, you can manage your browser’s storage settings to prevent automatic downloads. For more tips on protecting your privacy, read our guide on Chrome privacy settings every user should know.
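Readers who want to confirm that the model stays gone after disabling it can measure the folder where it is stored, before and after a browser restart. The script below is a minimal sketch of that check, not an official tool. It assumes you have already located the model folder yourself; the folder path is passed on the command line, and the function names are hypothetical helpers written for this example.

```python
import os
import sys
from pathlib import Path


def directory_size_bytes(root: Path) -> int:
    """Return the total size of regular files under root, or 0 if root is missing."""
    if not root.is_dir():
        return 0
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            file_path = Path(dirpath) / name
            try:
                # Skip symlinks so a linked file is not counted twice.
                if not file_path.is_symlink():
                    total += file_path.stat().st_size
            except OSError:
                # The file vanished or is unreadable; ignore it and keep going.
                continue
    return total


def format_gb(num_bytes: int) -> str:
    """Format a byte count in decimal gigabytes (1 GB = 1,000,000,000 bytes)."""
    return f"{num_bytes / 1e9:.2f} GB"


if __name__ == "__main__":
    if len(sys.argv) != 2:
        sys.exit("usage: python check_model_size.py <path-to-model-folder>")
    # Assumption: the caller supplies the folder; no path is hard-coded here.
    model_dir = Path(sys.argv[1])
    size = directory_size_bytes(model_dir)
    if size == 0:
        print(f"{model_dir} is missing or empty")
    else:
        print(f"{model_dir} currently uses {format_gb(size)}")
```

Run it once after turning the setting off, restart Chrome, and run it again. If the reported size jumps back to several gigabytes, the model has been downloaded again.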

What This Means for the Future of On-Device AI

The Chrome silent install backlash may force Google to rethink its approach. As AI becomes more integrated into browsers, the line between helpful features and intrusive practices will blur. Companies like Google must balance innovation with transparency.

Tabriz’s response suggests that Google views on-device AI as non-negotiable for Chrome’s future. However, the company’s reluctance to address consent directly could erode user trust. Moving forward, clearer communication and opt-in mechanisms will be essential.

In conclusion, while Google has explained the rationale behind the Gemini Nano auto-install, it has not fully resolved the privacy concerns. Users who value control over their devices should take proactive steps to manage these settings. The debate is far from over, and regulators may yet have the final word.
