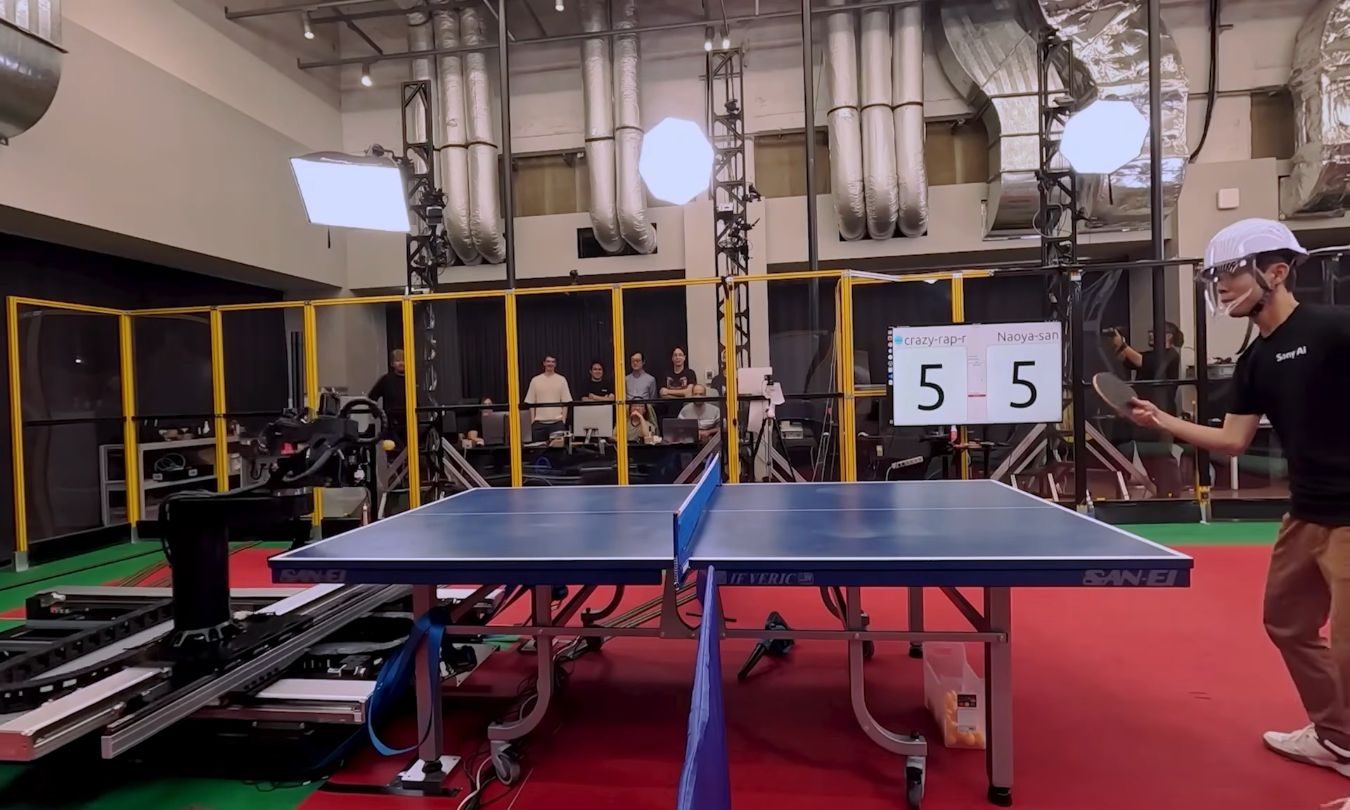

Sony’s table tennis robot made me think about what happens when AI gets a body

I wanted to dismiss Sony’s table tennis robot as another expensive lab flex. A machine that can rally against elite players is impressive, sure, but it also sounds like the kind of demo built to make executives clap in a room where everyone already agreed to be impressed.

But table tennis is a nastier test than it looks. The ball is small, fast, spinning, and rude enough to change direction the moment it hits the table. Sony's system faces a problem less forgiving than pure calculation. It has to see, predict, and act before the point is gone.

The challenge of embodied AI: why Sony’s robot matters

Sony tested its robot, Ace, against five elite players and two professionals under official competition rules, and it came away with several wins. The more useful detail is what it had to handle during those matches: fast, high-spin shots that change direction after the bounce and punish even small delays. In plain English, Ace wasn't just hitting the ball back. It was reading motion, making a prediction, and moving before the rally escaped it.

This is where Ace becomes more than a sports demo. It becomes a case study in embodied AI: intelligence that must operate in the physical world, not just on a screen.

AI is leaving the board

The usual "AI beats human" headline undersells what Ace is actually testing. We've already seen that story in cleaner arenas. IBM's Deep Blue beat Garry Kasparov in 1997, and the symbolism still hangs over every contest between human skill and machine calculation.

But chess, for all its strategic depth, is polite to computers. The board doesn’t wobble. The pieces don’t spin. A knight never comes screaming back at 60 miles per hour because someone clipped it at a nasty angle.

Sony’s robot points to a different shift. When AI has to move, intelligence becomes a timing problem. The system has to read the world quickly enough to act inside it. That’s more useful, and much harder to keep neatly boxed in.

How the body changes the problem for AI

This is where the table tennis demo starts doing more work. A robot that can track spin, predict motion, and adjust its response in real time isn’t automatically a factory worker, warehouse picker, nurse assistant, farmhand, or disaster-response machine. That leap would be too neat, which usually means it’s wrong.

The broader robotics market is already well past the cute-demo stage. The International Federation of Robotics says 542,000 industrial robots were installed in 2024, more than double the figure from a decade earlier. It expects installations to reach 575,000 in 2025 and pass 700,000 by 2028. That doesn’t make Ace a factory product, but it does make it part of a bigger automation story that’s already showing up on production floors.

Even on controlled industrial floors, robots increasingly need to handle variation instead of repeating one perfect motion forever. In logistics, they face crushed boxes, bad angles, missing labels, and people walking through the wrong lane at the worst possible time. Outdoors, mud, weather, uneven ground, and produce shaped by nature aren't known for respecting software requirements.

The labor side of embodied AI

The labor side is where the story gets less cute. McKinsey estimates that today’s technology could theoretically automate activities accounting for about 57% of current US work hours. That isn’t a clean jobs-lost number, and McKinsey is careful about that point.

The pressure is subtler and probably messier: tasks get split apart, roles get redesigned, and some workers discover that "efficiency" has a habit of arriving with a spreadsheet and a forced smile.

Some settings raise the penalty for being wrong. A chatbot that makes a mistake can waste an afternoon. A robot that misreads a patient's balance, a wheelchair, or a hospital hallway can do real damage. The more embodied AI becomes, the less forgiving its mistakes get.

The bill comes with the body: infrastructure costs

The infrastructure doesn’t disappear when AI gets legs, wheels, or a robot arm. It still depends on chips, data centers, cooling systems, electricity, water, and a grid that wasn’t built around every company suddenly discovering it needs more compute.

The International Energy Agency expects global data center electricity consumption to more than double to around 945 TWh by 2030, just under 3% of global electricity consumption. That share may sound small, but the demand is concentrated: a local grid, water system, or community near a new data center has to absorb it.

It's not all grim, though. Smarter robots could reduce factory waste, help inspect dangerous sites, improve precision agriculture, and take on work that breaks human bodies for a living. The upside is real, but so is the cost.

Deep Blue made AI feel powerful inside a board game. Ace makes it feel like the board is gone, and the pieces are now factories, hospitals, farms, grids, and workers trying to guess what happens next.

Asimov imagined robots bound by rules. The version we're actually building may be bound first by economics.