Google Gemini’s Next Leap: Reading Your Emails and Calendar to Act Before You Ask

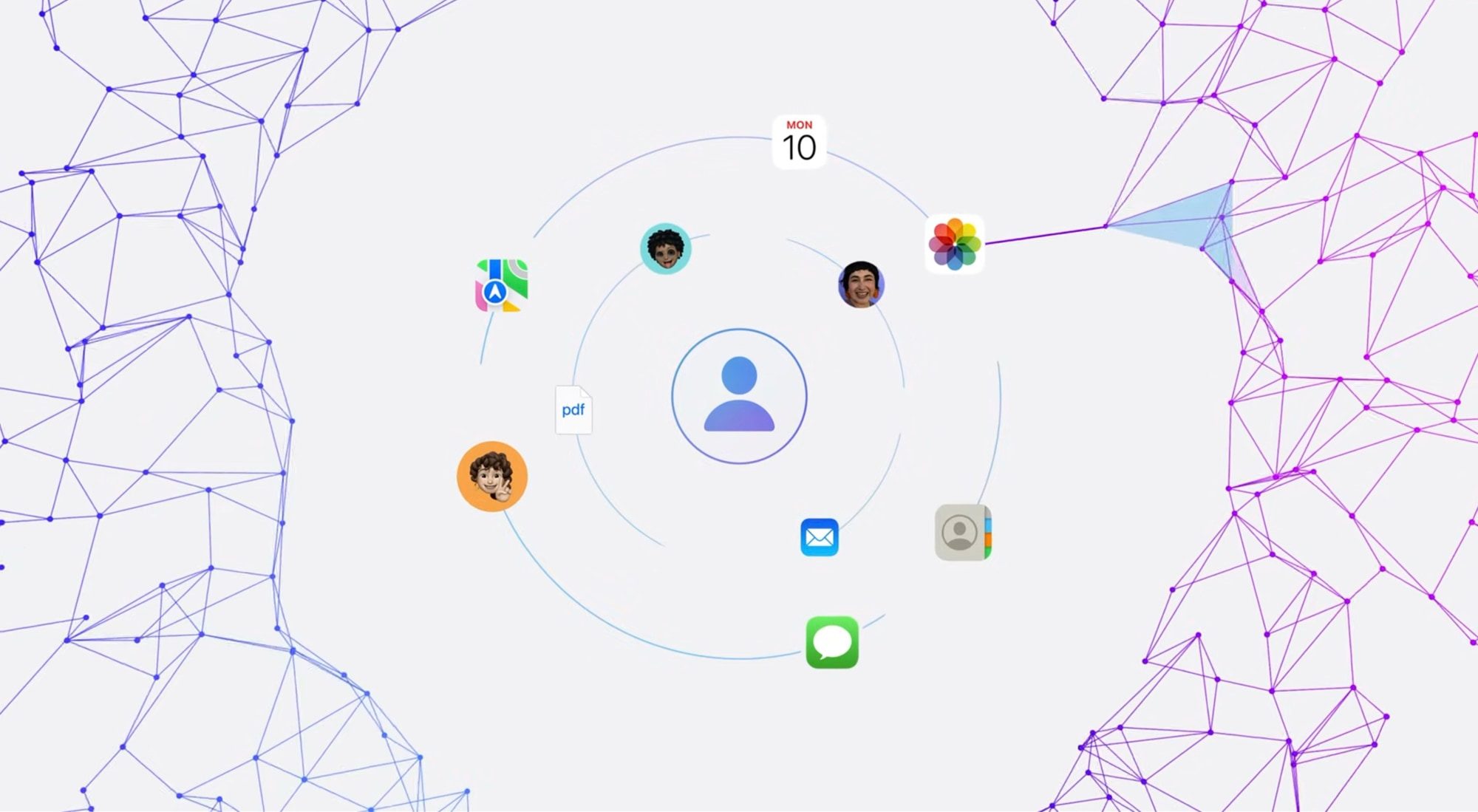

Imagine an assistant that doesn’t wait for you to say its name. Instead, it scans your inbox, checks your schedule, and offers help before you even realize you need it. That’s what Google Gemini is aiming to deliver with a newly discovered feature called Proactive Assistance. According to a 9to5Google deep dive into the latest Google app beta, the code reveals a system designed to anticipate your needs without you lifting a finger.

This marks a significant shift in how we interact with AI. Instead of reactive commands, Gemini will soon offer proactive suggestions based on what it learns from your digital life. But how does this work, and what does it mean for your privacy? Let’s break it down.

How Gemini Proactive Assistance Works

The core idea behind Gemini Proactive Assistance is simple: the AI monitors your apps and triggers helpful actions automatically. During initial setup, you choose which services Gemini can access. Gmail and Google Calendar are the primary examples, but the feature also extends to incoming notifications and on-screen content—only if you grant permission.

For instance, if you have a test on your calendar, Gemini might proactively send a notification with an AI-generated practice quiz. Google demonstrated this exact scenario at I/O 2025, showing how the assistant noticed a test on a user’s calendar and offered help without being asked. It’s a glimpse of a future where your phone becomes a true personal assistant.
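To make the consent-gated flow concrete, here is a minimal Python sketch of the idea described above. It is purely illustrative and is not Google’s implementation: the `CalendarEvent` type, the `proactive_suggestions` function, the `granted_sources` set, and the naive keyword match on "test" are all assumptions invented for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class CalendarEvent:
    """Hypothetical stand-in for a calendar entry."""
    title: str
    start: datetime


def proactive_suggestions(events, granted_sources, now, horizon=timedelta(days=2)):
    """Suggest a practice quiz for upcoming tests, but only with calendar consent."""
    # Without an explicit grant for the calendar, nothing is read at all.
    if "calendar" not in granted_sources:
        return []
    suggestions = []
    for event in events:
        upcoming = now <= event.start <= now + horizon
        # Naive keyword match, used here only for illustration.
        if upcoming and "test" in event.title.lower():
            suggestions.append(f"Practice quiz available for: {event.title}")
    return suggestions


now = datetime(2025, 6, 1, 9, 0)
events = [
    CalendarEvent("Biology test", datetime(2025, 6, 2, 10, 0)),
    CalendarEvent("Team lunch", datetime(2025, 6, 1, 12, 0)),
]

print(proactive_suggestions(events, {"gmail", "calendar"}, now))
print(proactive_suggestions(events, {"gmail"}, now))
```

The first call returns one suggestion for the biology test, while the second returns an empty list because calendar access was never granted. A real assistant would rely on far richer context than a keyword match, but the permission check coming first mirrors the opt-in setup the beta code describes.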

What About the Daily Brief?

Interestingly, the previously named “Your Day” feed has been rebranded as “Daily Brief.” This is likely the first visible component of the broader Proactive Assistance rollout. Think of it as a morning digest that adapts based on your schedule, emails, and priorities—all without manual input.

Building on this, the feature isn’t just about calendar events. It can also read your notifications to identify urgent tasks or important messages. However, all of this happens only with your explicit consent, which brings us to a crucial topic: privacy.

Privacy: Does Gemini Send Your Data to the Cloud?

One of the most reassuring aspects of Gemini Proactive Assistance is its apparent commitment to on-device processing. According to the beta code, everything the assistant accesses is handled in a private, encrypted environment directly on your phone. The same strings indicate that none of this data feeds into Google’s AI model training or leaves your device.

If that holds, Gemini would read your emails and calendar locally, learning your patterns without sending sensitive information to the cloud. For users concerned about privacy, this would be a significant win: a model that balances convenience with control, which is a rare combination in the world of AI assistants.

However, it’s worth noting that this feature has only been spotted in beta code. The information comes from an APK teardown, meaning Google hasn’t officially confirmed the details, and features found this way can change or never ship. So while the potential is exciting, we should temper expectations until an official announcement.

What This Means for the Future of AI Assistants

Proactive Assistance represents a fundamental shift in how AI interacts with users. Instead of waiting for commands, the assistant learns your habits and offers help at the right moment. This could include sending reminders based on email threads, suggesting responses to calendar invites, or even preparing summaries of unread notifications.

As a result, the line between reactive and proactive AI is blurring. Google Gemini is positioning itself as a tool that understands context—not just words. For example, if you receive an email about a flight delay, Gemini might automatically suggest rescheduling your calendar appointments. This level of integration could save time and reduce cognitive load.

On the other hand, this raises questions about dependency. Will users become too reliant on AI to manage their lives? And how will Google handle edge cases where the AI misinterprets data? These are challenges that the company will need to address as the feature rolls out.

How to Prepare for Gemini Proactive Assistance

If you’re eager to try this feature, keep an eye on the Google app beta updates. Once it’s officially released, you’ll likely see a setup wizard that asks for permissions. Start by granting access to apps you trust, like Gmail and Calendar, and review your notification settings to ensure Gemini can read what’s relevant.

Additionally, consider enabling Gemini on Android now to get familiar with the assistant’s current capabilities. For those who value privacy, remember that you can revoke permissions at any time. The key is to strike a balance between convenience and control.

In conclusion, Gemini Proactive Assistance is a bold step toward a more intuitive AI. By reading your emails, calendar, and notifications, it aims to help you before you even ask. While privacy safeguards are encouraging, the feature’s success will depend on how well it understands context—and how much trust users are willing to place in it.
